Computer vision

Computer vision is the flip side of computer graphics: we want our computers to be able to look at the world and understand what they are seeing. Here are several examples of that.

Webcams

Motion detection (from Daniel Shiffman's Learning Processing): This program uses Processing to display the live video feed from a webcam, and highlight the moving parts of the scene.
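One common way to find the moving parts of a scene is frame differencing: compare each pixel with the same pixel in the previous frame and flag it when the brightness changes by more than a threshold. The sketch below illustrates the idea only and is not Shiffman's Processing code. It assumes grayscale frames stored as nested lists of 0-255 values, and the names `motion_mask`, `motion_fraction`, and `threshold` are made up for this example.

```python
def motion_mask(previous, current, threshold=30):
    """Return a grid of booleans: True where brightness changed by more than threshold."""
    return [
        [abs(cur - prev) > threshold for prev, cur in zip(prev_row, cur_row)]
        for prev_row, cur_row in zip(previous, current)
    ]


def motion_fraction(mask):
    """Return the fraction of pixels flagged as moving (0.0 for an empty mask)."""
    total = sum(len(row) for row in mask)
    moving = sum(sum(row) for row in mask)
    return moving / total if total else 0.0


# Two tiny 3x3 grayscale frames standing in for consecutive webcam frames.
frame_a = [[10, 10, 10], [10, 10, 10], [10, 10, 10]]
frame_b = [[10, 10, 10], [10, 200, 10], [10, 10, 12]]

mask = motion_mask(frame_a, frame_b)
# Only the center pixel changed by more than the threshold; the small
# change in the bottom-right corner is treated as sensor noise.
```

A display program would then draw the flagged pixels in a highlight color on top of the live feed. The threshold trades sensitivity against noise, so a real webcam usually needs some tuning.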

Face detection (a tutorial by Ana Huaman): This program finds faces in a webcam video feed. It uses a powerful library for 2D computer vision called OpenCV.

The Kinect sensor

The Kinect sensor belongs to a class of devices called depth cameras. These cameras can estimate the distance to objects in their field of view; thus they produce a 3D model of a scene, not just a 2D image. The Kinect works by projecting a complex pattern of infrared beams onto the scene, and observing where these beams land, using an infrared camera that is offset to the side of the projector. This arrangement is somewhat like stereo vision, and it allows the Kinect to perceive depth.

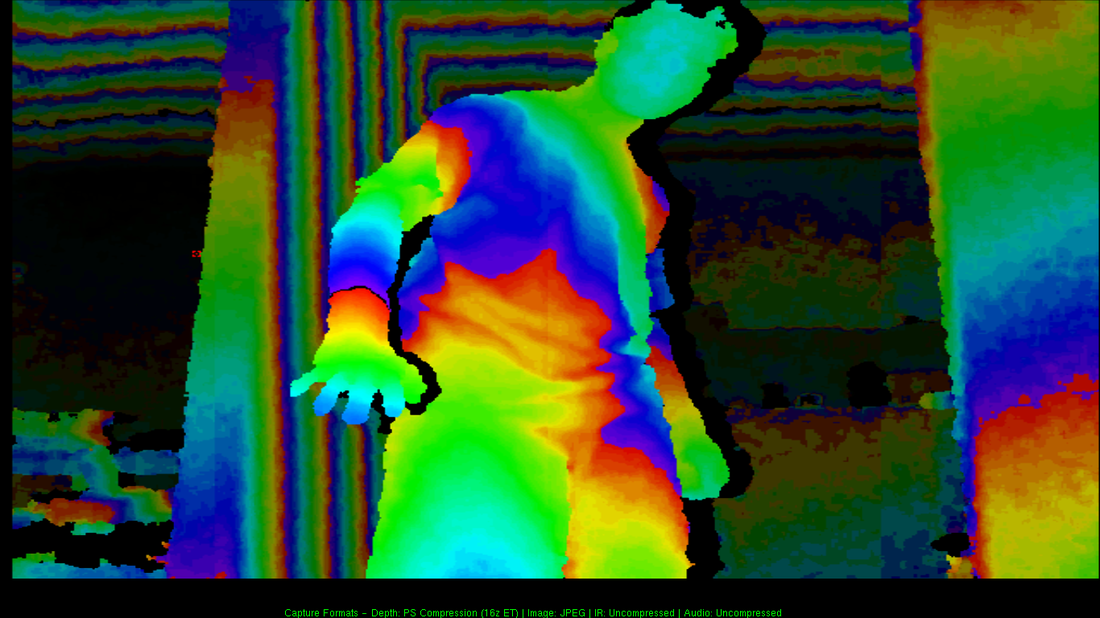
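The stereo-like geometry can be illustrated with the textbook triangulation formula, in which depth is inversely proportional to the sideways shift (disparity) of a feature between the two viewpoints. This is a simplified pinhole model and not the Kinect's actual processing pipeline. The function name and the numbers in the example are illustrative assumptions, not device specifications.

```python
def depth_from_disparity(focal_length_px, baseline_m, disparity_px):
    """Estimate depth in meters using depth = focal_length * baseline / disparity.

    focal_length_px: camera focal length, in pixels
    baseline_m:      distance between projector and camera, in meters
    disparity_px:    observed sideways shift of a projected dot, in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Illustrative values only: a larger shift means a closer object.
near = depth_from_disparity(580.0, 0.075, 58.0)
far = depth_from_disparity(580.0, 0.075, 29.0)
```

Because depth varies with the inverse of disparity, a one-pixel measurement error matters much more for distant objects than for nearby ones, which is one reason depth cameras of this kind lose accuracy with range.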

Below is an example of the Kinect's depth image. Objects at different distances from the camera are shown in different colors; as one moves from near to far-away objects, the colors cycle through the rainbow several times. This image was generated using the NiViewer utility, which is part of the OpenNI SDK.
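A repeating rainbow coloring like the one described above can be produced by mapping depth to hue and wrapping around at a fixed interval. The sketch below is an assumption about how such a color map might work and is not taken from NiViewer's source. The function name `depth_to_rgb` and the default `cycle_mm` interval are hypothetical.

```python
import colorsys


def depth_to_rgb(depth_mm, cycle_mm=1000.0):
    """Map a depth in millimeters to an (r, g, b) tuple of 0-255 integers.

    The hue runs through the full rainbow once every cycle_mm millimeters,
    so depths that differ by a whole number of cycles get the same color.
    """
    hue = (depth_mm % cycle_mm) / cycle_mm
    r, g, b = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
    return (round(r * 255), round(g * 255), round(b * 255))


# Depths 500 mm and 2500 mm are two full cycles apart, so they share a color.
color_near = depth_to_rgb(500)
color_far = depth_to_rgb(2500)
```

Cycling the colors makes small depth differences visible across the whole range, at the cost of ambiguity: the same color can stand for several different distances.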

The Kinect is often used together with software for body tracking and pose estimation; that is, the software can recognize people, and their gestures and body movements. Two simple examples of this are hand tracking and center-of-mass tracking (from Greg Borenstein's book Making Things See). These programs are written in Processing, and use the OpenNI library, together with a Processing wrapper called simple-openni.
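To give a sense of what center-of-mass tracking computes, here is a minimal sketch that averages the coordinates of all pixels labeled as belonging to a person. It works on a 2D mask in image coordinates and does not use the OpenNI or simple-openni APIs. The mask format and the function name `center_of_mass` are assumptions made for illustration.

```python
def center_of_mass(mask):
    """Return the (x, y) centroid of the True pixels in mask, or None if there are none."""
    count = 0
    sum_x = 0
    sum_y = 0
    for y, row in enumerate(mask):
        for x, is_user in enumerate(row):
            if is_user:
                count += 1
                sum_x += x
                sum_y += y
    if count == 0:
        return None  # nobody is visible in this frame
    return (sum_x / count, sum_y / count)


# A 4x4 mask in which a 2x2 block of pixels belongs to the tracked person.
user_mask = [
    [False, False, False, False],
    [False, True,  True,  False],
    [False, True,  True,  False],
    [False, False, False, False],
]
centroid = center_of_mass(user_mask)
```

Running this on every frame and drawing a marker at the result gives a single point that follows the person around the scene.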

The depth data from the Kinect sensor can be represented as a point cloud: a set of 3D points, one for each pixel with a valid depth reading. Point clouds can be used in many ways. The Point Cloud Library provides an array of tools for this purpose; one neat example is 3D object recognition.
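A depth image can be turned into a point cloud by back-projecting each pixel through a pinhole camera model. The sketch below is a plain Python illustration and does not use the Point Cloud Library. The intrinsic parameters `fx`, `fy`, `cx`, `cy` and the convention that a depth of zero marks a missing reading are assumptions of this example.

```python
def depth_to_points(depth, fx, fy, cx, cy):
    """Convert a depth image (rows of depths in meters) into a list of (x, y, z) points.

    fx, fy: focal lengths in pixels; cx, cy: principal point in pixels.
    Pixels with a depth of zero or less are skipped as missing readings.
    """
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0:
                continue
            x = (u - cx) * z / fx  # offset right of the optical axis
            y = (v - cy) * z / fy  # offset below the optical axis
            points.append((x, y, z))
    return points


# A 2x2 depth image with one missing reading in the top-left corner.
depth_image = [[0.0, 2.0],
               [2.0, 2.0]]
cloud = depth_to_points(depth_image, fx=2.0, fy=2.0, cx=0.5, cy=0.5)
```

The result is an unordered list of 3D points, which is the kind of input that point cloud tools typically start from.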